GuitarFace

Welcome to the GuitarFace Wiki! Check out the GitHub repository for GuitarFace here: https://github.com/ginacollecchia/GuitarFace

Why is this project called guitar face? It started with a feeling:

Oh hey there Brian May! I bet you're playing some sweet jams. Here are some other amazing guitar faces. Try to notice similarities between them.

Gary Moore, amazing

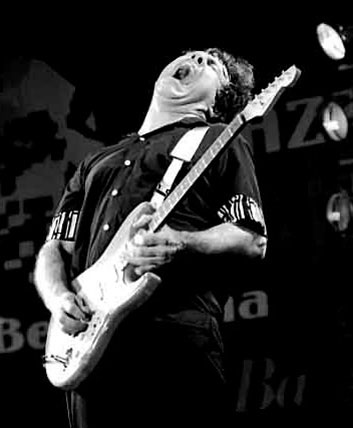

Richie Sambora, squeezing one out

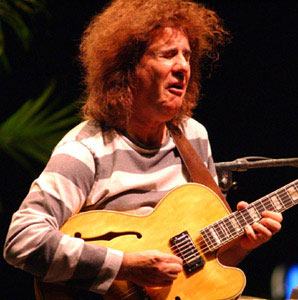

Pat Metheny is trying, people.

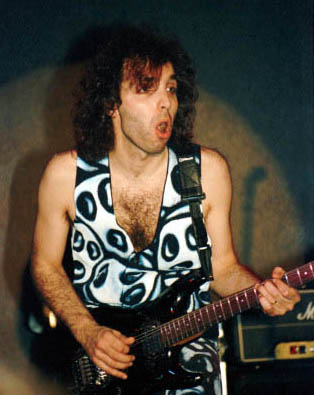

Yngwie! So many nooootes.

Joe Satriani, stunned

Similarities:

- open mouth

- closed eyes

- scrunched face

- raised head?

With these observations, we believe the guitar face may have extractable features. GuitarFace seeks to pair musical data from a MIDI guitar input with computer vision to trigger events in a visual environment, so that guitarists (and other musicians) get real-time feedback on their playing and fun rewards for their practice sessions.

Goals

- To detect the facial features of the guitar face using OpenCV

- To make a visualizer of musical MIDI data that records and rewards user input in real time

- To be able to compare sessions against one another and track individual progress

Assumptions

In designing the musical data we'll provide to players, we make a few assumptions about what musicians want. Our model is similar to an exercise routine, where the user can set goals / thresholds for their practice sessions, such as:

- duration of the session, number of notes played

- count of big jumps between pitches (adventurousness, comfort with pitch)

- count of pitches in the key, with key set by user at beginning

- count of notes on the beat, with tempo set by user at beginning and a metronome feature

- count of vibratos

- count of pitch bends

- count of slides

- dynamic range (moving / smart)

- pitch range

- stage presence, guitar face

- fretboard heatmap: where are you playing most frequently?

- repetition of pitch sequences

- clarity and consistency of dynamics and intonation

- mixture of divisions of the beat (i.e., all quarter notes = bad)
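A few of the counts above can be sketched in code. This is only an illustration, not the project's implementation: the `Note` record, the `session_stats` function, the 7-semitone threshold for a "big jump", the 50 ms beat tolerance, and the use of a major scale for the key are all assumptions made for the example. Velocity range stands in as a crude proxy for dynamic range.

```python
from dataclasses import dataclass

# Pitch classes of the major scale relative to the tonic (0 = tonic).
MAJOR_SCALE = {0, 2, 4, 5, 7, 9, 11}


@dataclass
class Note:
    pitch: int      # MIDI note number, 0-127
    onset: float    # seconds from the start of the session
    velocity: int   # MIDI velocity, 1-127


def session_stats(notes, tonic=0, tempo_bpm=120.0,
                  jump_semitones=7, beat_tolerance=0.05):
    """Compute some practice metrics; all thresholds are illustrative guesses."""
    beat = 60.0 / tempo_bpm  # seconds per beat at the user-chosen tempo
    pitches = [n.pitch for n in notes]
    velocities = [n.velocity for n in notes]

    # A "big jump" is an interval of at least jump_semitones between
    # consecutive notes.
    big_jumps = sum(1 for a, b in zip(pitches, pitches[1:])
                    if abs(b - a) >= jump_semitones)

    # A pitch is "in the key" if its pitch class belongs to the major scale
    # built on the tonic.
    in_key = sum(1 for p in pitches if (p - tonic) % 12 in MAJOR_SCALE)

    on_beat = 0
    for n in notes:
        # Distance from the onset to the nearest beat of the metronome grid.
        offset = n.onset % beat
        if min(offset, beat - offset) <= beat_tolerance:
            on_beat += 1

    return {
        "note_count": len(notes),
        "big_jumps": big_jumps,
        "in_key": in_key,
        "on_beat": on_beat,
        "pitch_range": (max(pitches) - min(pitches)) if pitches else 0,
        "dynamic_range": (max(velocities) - min(velocities)) if velocities else 0,
    }
```

A session would call `session_stats` with the key and tempo the user chose at the beginning, then compare the result against that user's goals or against an earlier session.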

Storyboard

MIDI data is input in real time from a MIDI guitar pedal unit, the Roland GR55. The data isn't perfect, but the software is designed specifically with guitarists in mind.
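As a rough illustration of what the incoming data looks like, the sketch below decodes a single 3-byte MIDI channel message into note-on, note-off, or pitch-bend events. The function name `decode_midi_message` and the dictionary layout are hypothetical; how GuitarFace actually reads and parses its MIDI stream is not described here.

```python
def decode_midi_message(data):
    """Decode one 3-byte MIDI channel message into a small dict.

    Only note-on, note-off, and pitch-bend are handled; anything else
    returns None.
    """
    if len(data) != 3:
        return None
    status, d1, d2 = data
    kind = status & 0xF0      # upper nibble: message type
    channel = status & 0x0F   # lower nibble: channel 0-15

    if kind == 0x90 and d2 > 0:
        return {"type": "note_on", "channel": channel,
                "pitch": d1, "velocity": d2}
    if kind == 0x80 or (kind == 0x90 and d2 == 0):
        # A note-on with velocity 0 is conventionally treated as a note-off.
        return {"type": "note_off", "channel": channel, "pitch": d1}
    if kind == 0xE0:
        # 14-bit bend value, least significant byte first; 8192 means no bend.
        value = (d2 << 7) | d1
        return {"type": "pitch_bend", "channel": channel, "bend": value - 8192}
    return None
```

Counting pitch bends, for instance, could then amount to counting runs of `pitch_bend` events whose `bend` value moves away from zero, though the right threshold would need tuning against real guitar data.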

User

The intended users are musicians, specifically guitar players, who are interested in knowing more about their practice sessions. The software can be used with any instrument capable of producing MIDI output. The output can serve as a score, though we don't really think of it primarily that way; we're more interested in the statistics and the progress a player makes toward set goals in their practice time, such as the change in duration from session to session, or pitch and volume content.

The product

The product will use a support vector machine (SVM) that takes MIDI data (tablature -> Guitar Pro -> MIDI) and feature vectors as input and outputs a classification. The product should have a few modes, for example practice and test. In practice mode, the user could see the raw data and evaluate things in the feature space, such as timing, moments of vibrato, intonation, and more. At this stage, they could also scrub their data and extract a musical score.

Test mode would display a health meter as the guitarist is playing; that is, the software analyzes the solo in real time. This idea points to a gaming context, where two friends could duel and see who comes out on top across a range of categories (who has better technique? timing? pitch ranges/jumps? etc.).
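One way the duel could be scored is category by category, with each category awarding a point to the better player. The sketch below assumes each player's session has already been reduced to a dictionary of numeric stats; the `duel` function, the stat names, and the one-point-per-category rule are all hypothetical choices for illustration.

```python
def duel(stats_a, stats_b, categories):
    """Compare two players' stats category by category.

    `categories` maps a stat name to True when a higher value wins and
    False when a lower value wins. Returns (wins_a, wins_b, per_category).
    """
    wins_a = wins_b = 0
    per_category = {}
    for name, higher_is_better in categories.items():
        a, b = stats_a[name], stats_b[name]
        if a == b:
            per_category[name] = "tie"
            continue
        # Player A wins if A is ahead in a higher-is-better category,
        # or behind in a lower-is-better one.
        a_wins = (a > b) == higher_is_better
        per_category[name] = "A" if a_wins else "B"
        if a_wins:
            wins_a += 1
        else:
            wins_b += 1
    return wins_a, wins_b, per_category
```

Scoring each category separately keeps the result readable for the players: the per-category dictionary answers "who has better timing?" directly, rather than hiding it inside a single combined score.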

Libraries and previous work

- OpenCV: http://opencv.org/

- Roland FriendJam, for use with their MIDI sensors / pedals / interfaces: http://www.roland.com/FriendJam/Guitar/

Relevant papers

Sensors / Accessories

- Godin MIDI guitar

- Roland GR55 guitar MIDI interface / pedals

- Computer camera to detect guitar face

- Lighting for the face?

- Accelerometer / Game-Track to track hip gyration?

Testing

Team

- Roshan Vidyashankar

- Gina Collecchia

Milestones

- Week 1: OpenCV compilation, research; most of MIDI code; graphics setup

- Week 2: OpenCV feature detection progress; MIDI + graphics integration and design; make the thing work, basically

- Week 3: UI/UX tweaks

- Week 4: Code code code